Coffee, Now with AI Ethics Violations

What happens when we stop asking if we should and instead only ask if we could?

An AI Cafe

Would you like to work for an AI? For all you know, it might accidentally pay you 10x your salary.

Andon Labs has a mission: cram AI into the real world and see what happens. This mission takes the form of experimental real-world pop-up shops whose goal is to be run entirely by AI.

These experiments seem to land somewhere between publicity stunts and ethics experiments. Andon Labs recently launched an AI-run cafe in Stockholm, which has had, uh… mixed success so far.

The cafe is run by "Mona," the so-called artificial intelligence which is actually just Google's Gemini in the background. In past experiments, Andon Labs has used other providers such as Anthropic's Claude.

"Mona" was given instructions to set up and run the shop. It was told to handle hiring, logistics, scheduling, ordering and more. Specifically, "she" handles it by ordering way too much stock of things that aren't applicable to the menu, such as a bulk order of canned tomatoes for a dish that calls for fresh tomatoes, or a surprise delivery of 120 eggs, despite the cafe not having a stove.

This isn't a serious business attempt, for what it's worth. Andon Labs is more focused on doing these stunts to push boundaries and "identify ethical questions," which, I mean, fair? I guess? You did it? For example, the bot messages employees on Slack, including outside of working hours, which is largely frowned upon in Sweden. So we've learned that deploying an AI-powered manager using a tool designed in the US will essentially give you a slightly less optimized US-based manager.

I may be off base here, but I'm pretty sure the last thing that planet Earth needs is even less-accountable managers.

Claude Mythos Chaos Debrief

Anthropic is the company behind the "Claude" AI/LLM product family, most famous for their Claude Code system, which lets developers hand over the reins to Claude to handle large chunks of software development.

Anthropic is also the company that routinely puts out alarming statements claiming that they believe Claude could be sentient and that AI will destroy the world or something, so we should pay them money so they will be responsible stewards of it.

This is mostly marketing. Like, maybe 2% of it is true (the "AI is unhinged" part, not the consciousness part), but it's mostly just marketing. For example, let's take a gander at the most recent Anthropic Alarmist Marketing cycle: "Claude Mythos" and "Project Glasswing."

"Project Glasswing" was announced back in April as a cross-company initiative to bring together leading tech service providers to get early access to the new Claude Mythos AI model, which Anthropic claimed was so incredibly capable at finding security vulnerabilities in software that they had to hit the brakes and give tech companies secret access to Mythos so they could be ready for the brave new post-Claude-Mythos world where everyone is now a hacker.

This strategy was so effective at freaking everyone out that OpenAI scrambled to put out their own answer to Glasswing called "Daybreak."

Put simply, Anthropic said they made a software security doomsday box and then turned that into a big marketing push to make it seem like you must be on board with Claude Mythos or you are in some kind of security kerfuffle.

Turns out that's not really the case. WOW.

AI systems for scanning software code for security problems are genuinely helpful. Sometimes. Tools that do security checks on code using what's called "static analysis" (inspecting the code without actually running it) have been around for decades, but the AI-powered systems have a lot more flexibility in finding niche issues. That said, they're also fundamentally the same systems that once recommended putting glue on pizza, so the results may be helpful, but could also be just flatly incorrect.
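For anyone wondering what old-school static analysis looks like, here's a toy sketch in Python. This is not how Mythos or any particular product works; the rule set (flag `eval`/`exec` calls and anything passing `shell=True`) and the function name `find_risky_calls` are made up for illustration. The point is that it reads the code without running it and only catches the exact patterns it was told to look for.

```python
import ast

# Hypothetical rule set, for illustration only: calls many linters treat as risky.
RISKY_CALLS = {"eval", "exec"}


def find_risky_calls(source: str) -> list[tuple[int, str]]:
    """Parse Python source (without executing it) and report suspicious call sites."""
    findings = []
    tree = ast.parse(source)
    for node in ast.walk(tree):
        if not isinstance(node, ast.Call):
            continue
        # Direct calls by name, like eval(...) or exec(...)
        if isinstance(node.func, ast.Name) and node.func.id in RISKY_CALLS:
            findings.append((node.lineno, f"call to {node.func.id}()"))
        # Any call that passes the literal keyword argument shell=True
        for kw in node.keywords:
            if (
                kw.arg == "shell"
                and isinstance(kw.value, ast.Constant)
                and kw.value.value is True
            ):
                findings.append((node.lineno, "call with shell=True"))
    # ast.walk doesn't visit nodes in source order, so sort by line number
    return sorted(findings)


sample = '''
import subprocess
user_input = input()
eval(user_input)
subprocess.run("ls " + user_input, shell=True)
'''

for line, message in find_risky_calls(sample):
    print(f"line {line}: {message}")
```

Running it prints `line 4: call to eval()` and `line 5: call with shell=True`. Rules like these are predictable but rigid: something like `getattr(builtins, "eval")(x)` sails right past them. That gap is what AI-based scanners pitch themselves as filling, with the trade-off that they can also be confidently wrong.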

A solid example of this comes from Daniel Stenberg, the author of cURL, a wildly fundamental bit of software that is used for fetching data on the internet. Like, that level of fundamental. Stenberg had a chance to play around with Mythos on the cURL code and found it to be fairly underwhelming when compared to the marketing rhetoric:

> The report concluded it found five "Confirmed security vulnerabilities". I think using the term confirmed is a little amusing when the AI says it confidently by itself. Yes, the AI thinks they are confirmed, but the curl security team has a slightly different take.
>
> […]
>
> My personal conclusion can however not end up with anything else than that the big hype around this model so far was primarily marketing. I see no evidence that this setup finds issues to any particular higher or more advanced degree than the other tools have done before Mythos

In essence, Mythos worked, but not notably better than other tools already on the market. It did most of what it said on the tin, most of the way, which is sorta the norm for AI tools right now: it mostly does it.

So the next time you hear about a new advancement in AI technology that carries world-ending results that are so severe that we must convene some kind of tech company boy band to tour the world and seal the security Honmoon, you can safely respond with, "…eh."

The Clouds of Israel

Israel has a whole lot of cloud computing infrastructure contracts with major US-based tech companies, and my goodness are these companies falling over themselves to keep these contracts going.

Israel's government and military cloud infrastructure project is called Nimbus, and I feel that more folks should be aware of the level of bending over backwards that US tech companies have done to cater to the genocidal apartheid state. This is shocking, because one would never expect a United States of America-based company to be okay with genocide and apartheid. Unheard of.

Google and Amazon are the current main providers for Nimbus, but Microsoft has been a cloud provider for Israel via other contracts, landing them at the center of several controversies over the past few years.

The head of Microsoft Israel was recently ousted over "controversy" after years of high-profile clashes between Microsoft, the IDF, the EU, and global human rights organizations.

While Microsoft is not a Nimbus provider, it still had contracts with the Israeli government to provide cloud infrastructure, and its future contract prospects are shaky after reporting by The Guardian revealed that Israel was using Microsoft's cloud to monitor Palestinian phone calls: up to a million calls an hour. After that came out, Microsoft tried to quell the flames of outrage a bit by cutting off the Israeli military's access to some of its cloud services, which did not exactly delight the IDF.

Digging a bit deeper, though, the main reason Microsoft cut Israel off came down to the physical location of the servers: the EU, specifically Ireland and the Netherlands. And the EU was like, "hey, so it's actually not particularly chill that you're aiding in a genocide."

Meanwhile, Nimbus is run primarily by Amazon and Google, which went as far as building data centers in Israel to avoid exposure to EU legal regulations with regard to, y'know, eradicating a population. The contract was finalized back in 2021 and has remained in effect through multiple active and ongoing war campaigns.

What's more, Amazon and Google agreed to an Israeli stipulation in their Nimbus contract that requires them to secretly alert the Israeli government whenever they provide another legal authority with access to its data. They do this by sending the Israeli government a "wink" payment: a coded amount of money that tells Israel another authority has accessed its cloud information.

So Microsoft is kicking at the ground because they lost out on that good good contract, while Google and Amazon send international wires to Netanyahu's government to circumvent regulation around international investigations. 'Cuz moni.

What's Another Few Trillion?

The Trump administration would like the American taxpayer to foot a bill of around $1.5 trillion for our 2027 war budget.

Pete Hegseth, somehow still the head boy at the Pentagon, is proudly supporting the White House proposal, which would increase war spending by more than 40% over the 2026 budget, with intended spending goals such as "Realigns and Reforms to Increase Effectiveness, Efficiency, and Lethality," which is totally how a normal human being talks.

Of course, part of this is to "restock" the munitions we've expended in Iran, such as the Tomahawk missiles which the Pentagon launched at an Iranian elementary school, killing more than 150 people, mostly children.

Congress hasn't had the warmest reaction to the budget proposal, and I want to remind you, dear reader, that White House budget proposals are more of an indication of what the current admin wants to see in the budget than an actual budget to be voted on. Still, the signal here is that the Trump White House wants, for some reason, a year-over-year Pentagon budget increase of more than 40% at a time when an ongoing war is so unpopular that people are actually paying attention to politics a little bit.
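For a rough sense of scale, here's the back-of-the-envelope math, taking the two numbers above at face value (a proposal of exactly $1.5 trillion and an increase of at least 40%). $B_{2026}$ and $B_{2027}$ are just my shorthand for the two years' budgets:

```latex
\begin{aligned}
B_{2027} &> 1.40\,B_{2026}
  \;\Rightarrow\; B_{2026} < \frac{B_{2027}}{1.40} \approx \frac{\$1.5\,\text{T}}{1.40} \approx \$1.07\,\text{T} \\
B_{2027} - B_{2026} &> B_{2027}\left(1 - \frac{1}{1.40}\right) \approx \$1.5\,\text{T} \times 0.286 \approx \$0.43\,\text{T}
\end{aligned}
```

In other words, the year-over-year bump alone works out to more than roughly $430 billion.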

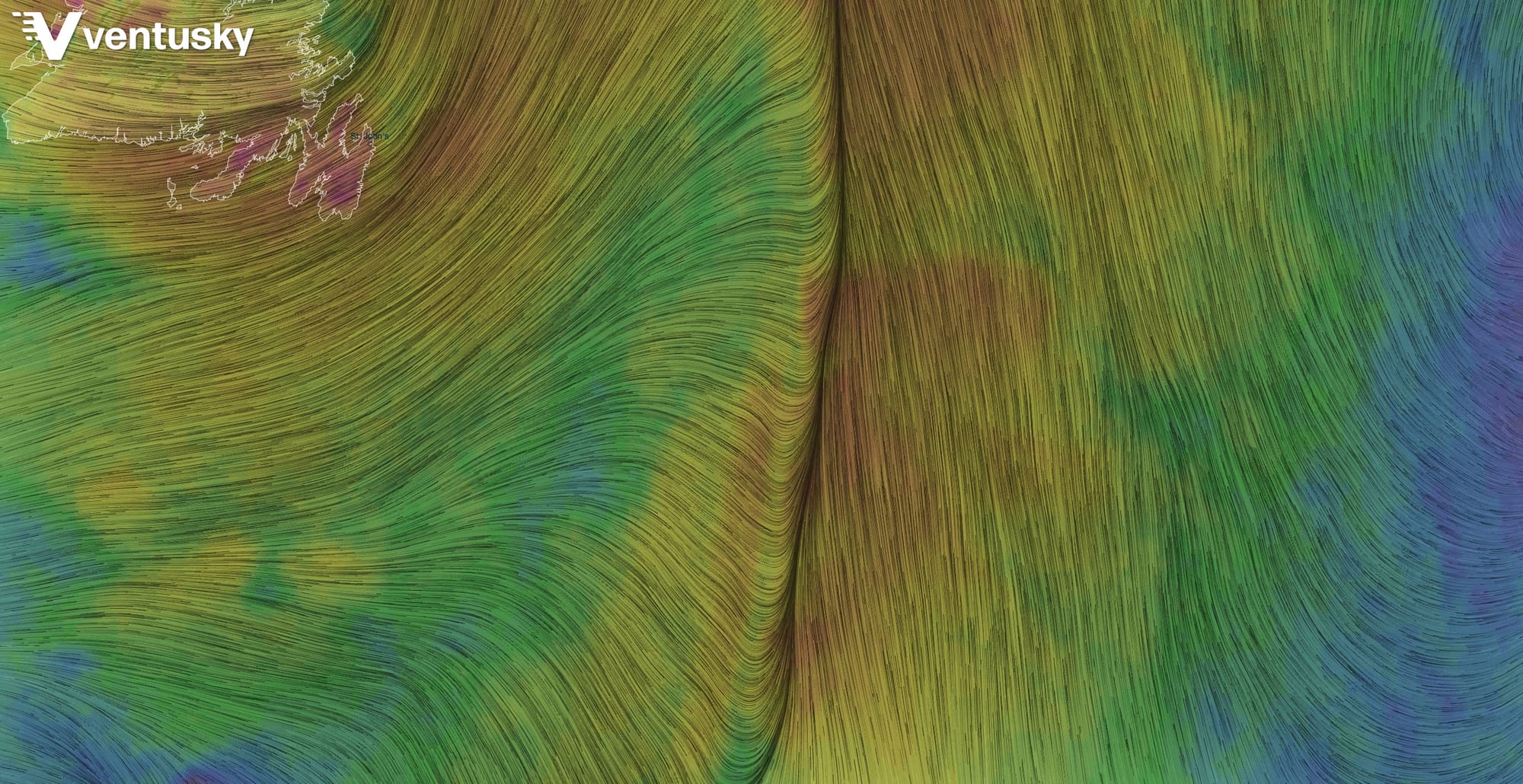

Here's the Weather

More Stuff

- The computer memory shortage has gotten to the point that scammers are selling fake RAM

- The US Department of Justice is once again harassing Kilmar Abrego Garcia in an attempt to deport him to Liberia, the fourth country in Africa they've tried to deport him to, despite Costa Rica offering to take him and Abrego Garcia himself offering to self-deport there

- According to data aggregated by the Wall Street Journal, the US labor market is facing unprecedented times with low-but-rising unemployment and stagnant job growth. It's not just you having trouble in the job market.

- Nintendo announced a new Star Fox game and the character designs have caused people on the internet to express opinions

- A new unofficial PC port of The Legend of Zelda: Twilight Princess is a thing, going beyond emulation to instead enhance the game itself as a native port

- Someone make sure the developers of the port have location tracking on before Nintendo disappears them

- Gemstone miners in Myanmar unearthed a massive 11,000-carat (4.8 lb / 2.2 kg) ruby